Backplain announces General Availability of its AI Control Platform for Small and Medium Business (SMB)

The first easy-to-use, affordable solution designed specifically to give SMBs secure, productive, side-by-side use of multiple Large Language Models.

"Small business owners expect to save more than $4,000 and 300 hours of work this year using generative AI… we're at the tip of the iceberg when it comes to what's possible with GenAI." — Amy Jennette, Senior Director of Marketing at GoDaddy

SAN DIEGO, CA — Small and medium-sized organizations are falling behind in capturing the productivity gains of Generative AI. Gartner defines small organizations as those with fewer than 100 employees and under $50M in revenue, and medium organizations as those with 100-999 employees and $50M-$1B in revenue. Smaller budgets, fewer resources, and a lack of in-house expertise make it hard for them to test, integrate, and use the technology both securely and effectively.

The answer — Backplain.

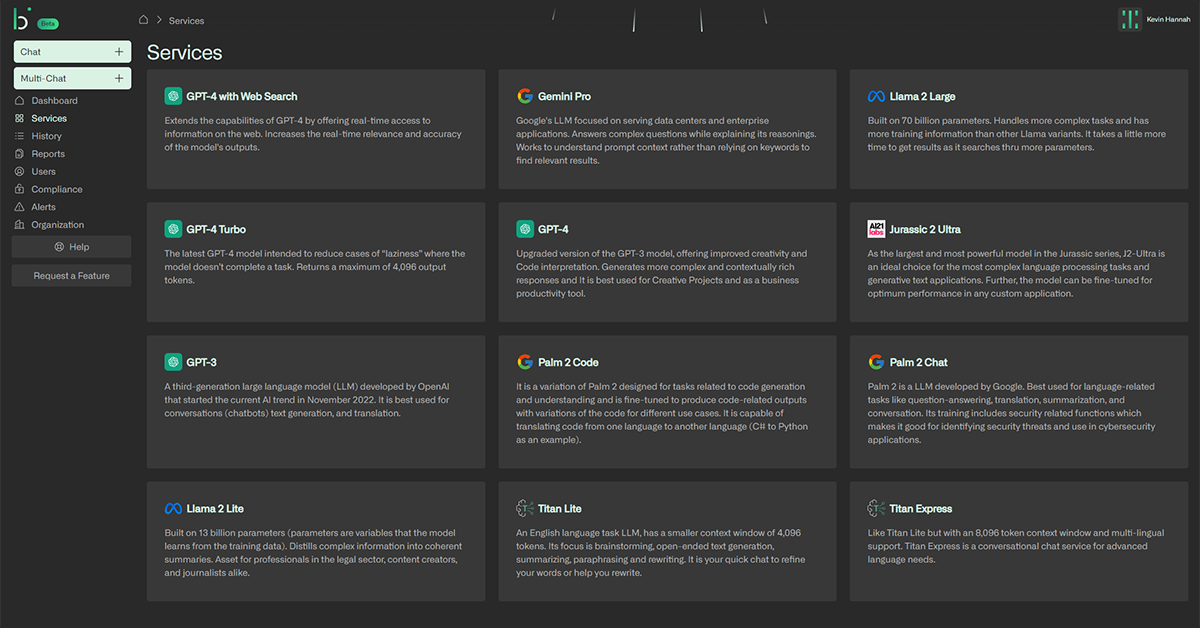

- Gives users a choice of models, so they do not need to rely on any single Large Language Model (LLM); for the organization, this means no vendor lock-in. Twelve models are currently included, with more added each month.

- Supports any combination of public, private, proprietary, and open-source LLMs, deployed on-premises, in a private cloud, or in a public cloud, as the organization's context dictates.

- Allows users to perform multi-model comparison and experimentation from a single, simple user interface, so they can easily see which model returns the "best answer".

- Enables deployment, orchestration, and replacement of LLMs to match organizational needs. Generative AI is changing rapidly, so organizations need to be able to adopt new advances quickly.

- Provides the enterprise guardrails IT needs to support safe and secure use of ChatGPT and other LLMs, ending unsanctioned "Shadow AI". With IT acting as an enabler rather than a blocker, departments can more easily overcome C-suite security objections to their use of Generative AI.

- Supports Trust, Risk, and Security Management (TRiSM) with ModelOps, proactive data protection, AI-specific security, and risk controls for both inputs and outputs.

- Filters and masks data so that only information the organization approves leaves its control.

- Provides reportable, auditable records of model usage, so organizations can be confident they know how Generative AI is being used and how much it costs.

- Includes "compliance checks" by incorporating Human-in-the-Loop (HITL) review into publishing workflows, making it possible to validate Generative AI output before it is published.

- Acts as an organization's own independent AI watchdog.

- Supports sending the same query to multiple LLMs simultaneously and then comparing their outputs, which is often the simplest way for users to spot potential hallucinations themselves.

- Lets users rate the success of each query, so the system can learn what distinguishes a good query from a bad one.

- Continually develops methods for improving queries through prompt engineering, and lets users share how queries were constructed, what succeeded or failed, and how they were improved, in the form of Prompt Notebooks.

- Offers Retrieval-Augmented Generation (RAG) services: a vector database is built from the organization's own data, and relevant documents are retrieved from it to augment the input context of each query.

- Offers data preparation services to ensure the data quality required for effective RAG or for an organization's own Fine-tuned Private LLM. Models are good; data is better.

Commenting on what was learned during the six-month beta, Backplain CEO Tim O'Neal said, "It is being able to present users with answers to the same query from multiple LLMs side-by-side that has been the feature that end users, especially in legal and HR, just love! Helping users be productive while IT is happy their data is secure appears to be our secret sauce..."